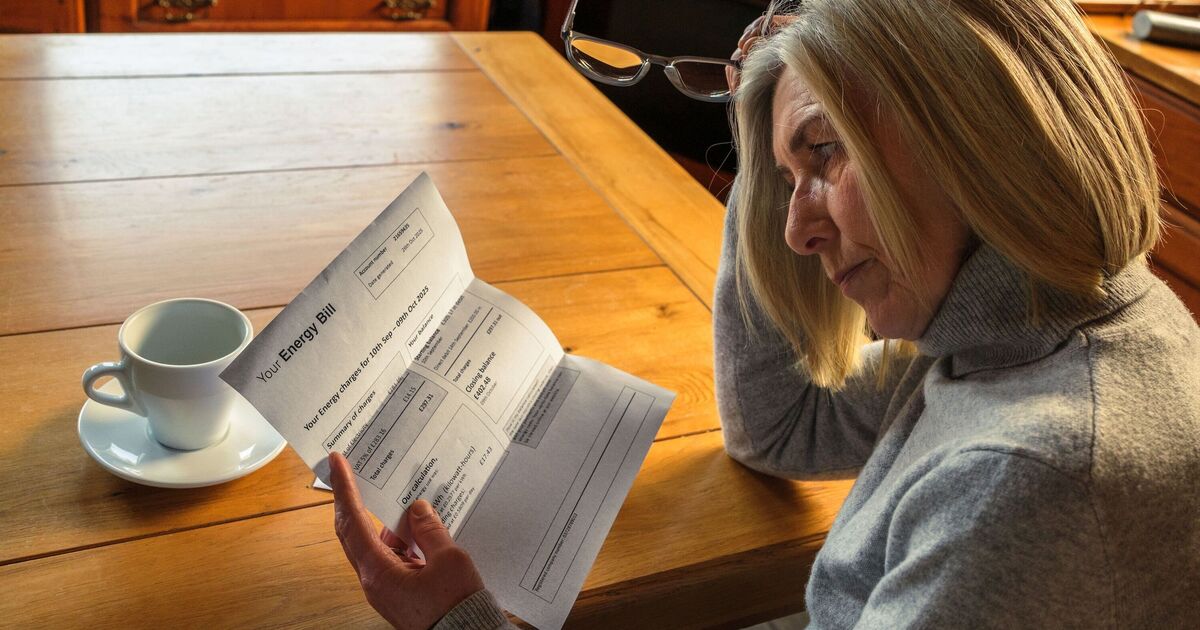

A growing number of investors are turning to AI for help with crucial investment decisions, experts have warned, because the technology can communicate with the authority and sophistication of a Warren Buffett. The fundamental issue, they caution, is that while AI excels at sounding convincing, plausible-sounding information is not necessarily accurate information.

Most people, specialists advise, should restrict their use of AI to market research and to using it as a sounding board, rather than relying on it to make actual investment and asset allocation decisions, stressing that there should always be a ‘human in the loop’.

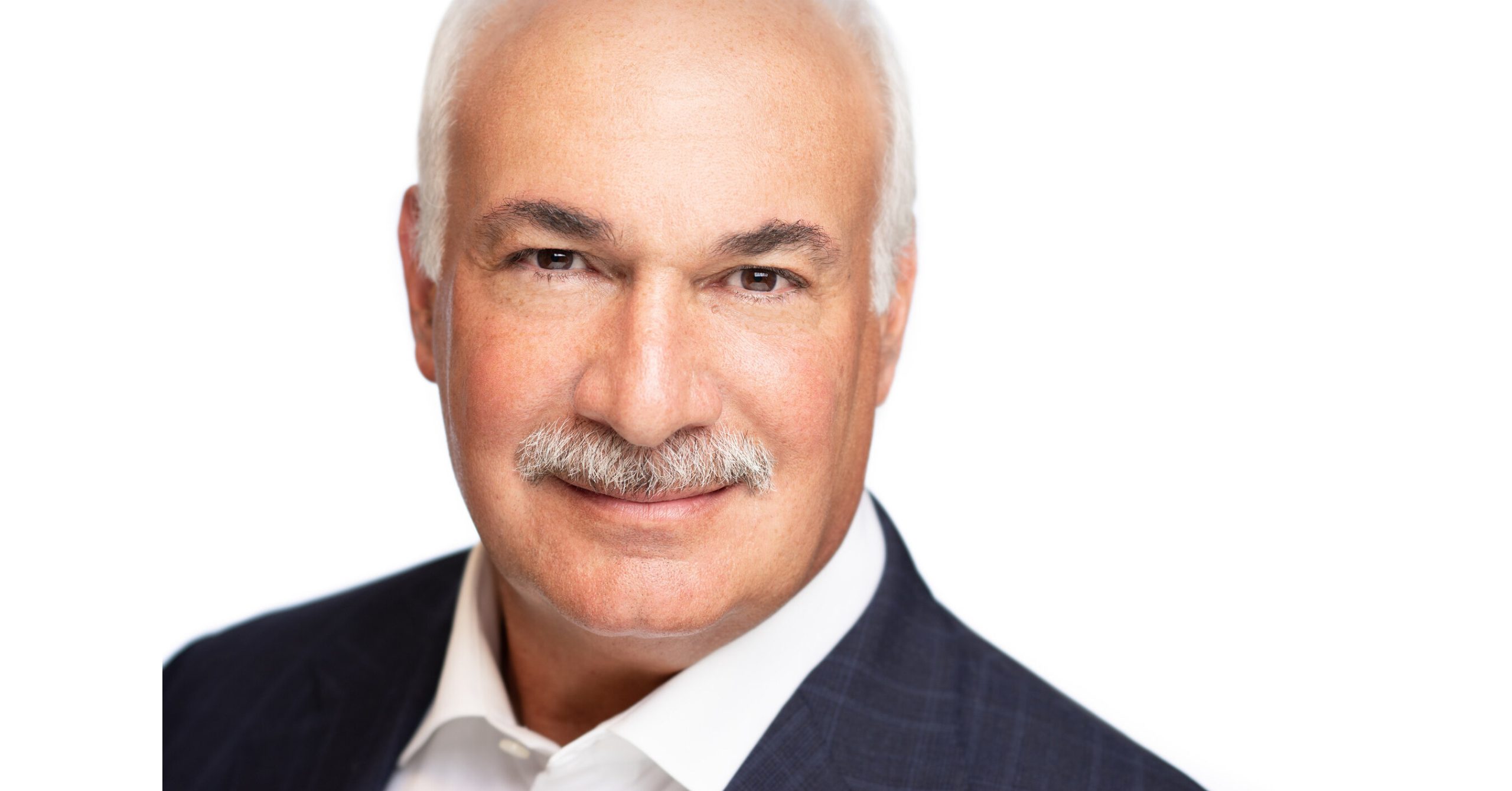

Rohit Parmar-Mistry, a data scientist and founder of AI consultancy Pattrn Data, said: “Using AI to decide where to invest is a dangerous path to go down, but it’s a path more and more people, in my experience, are choosing. AI can speak to you with the sophistication of legendary investors like Warren Buffett, but people need to remember that it is anything but.

“Yes, it can help you do your research and be a great sounding board, but it is not a substitute for judgment, suitability or regulated advice and is by no means the investment equivalent of the Holy Grail. It can explain diversification, compare asset allocations, model risk scenarios and stress test ideas, but a person’s investment portfolio is never just maths.

“Where they invest depends on their goals, tax position, time horizon, liquidity needs and risk tolerance when markets turn volatile.

“For most investors, AI is best used to challenge assumptions, improve understanding and help them ask better questions, not replace accountable advice outright.

“Industry insiders like to say the best way to use AI is always to have a ‘human in the loop’ and when it comes to investing we might adapt that to ‘adviser in the loop’.”

Meanwhile, AI expert and software engineer Colette Mason of Clever Clogs AI said AI agents were “exceptionally good at sounding plausible” but cautioned that “plausible information is not accurate information”.

She went on to say: “Using AI to sense-check what a regulated adviser has already built for you and fill in jargon knowledge gaps is genuinely useful. However, people need to understand that while a regulated adviser carries fiduciary duty and professional indemnity insurance, ChatGPT simply carries a disclaimer in tiny print: ‘AI can make mistakes’.

“It is imperative to remember that using AI is like pulling a fruit-machine lever, and there is a good chance you will be stung by the outcome.”