Researchers at the Allen Institute for AI and UC Berkeley have built EMO, a mixture-of-experts model that develops modular structures during pre-training. The model can be stripped down to a small fraction of its experts with barely any drop in performance.

Mixture-of-experts (MoE) architectures are now standard in language models like DeepSeek-V4 or Qwen3.5. They activate only a handful of experts per token, which lets them scale to hundreds of billions of parameters without blowing up compute costs. But the full model still has to sit in memory because different tokens within a task call on different experts. If you only want to do math or code, you can’t just load a slice of the model and call it a day.

According to the paper, that’s because experts in standard MoEs tend to latch onto shallow language patterns. They respond to things like prepositions, punctuation, or articles instead of higher-level domains like math or code. That makes it impossible to carve out a useful subset.

Document boundaries as a training signal

EMO tackles this with a simple trick. Instead of sorting training data into fixed domains like math or biology ahead of time—the way projects like BTX or Ai2’s own FlexOlmo do—the authors use document boundaries. Tokens within a document usually belong to the same domain.

EMO forces all tokens in a document to pick their active experts from a shared pool. The model decides which experts belong in that pool by averaging its router preferences across all tokens in a document and keeping the most frequently selected ones.
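Here is a minimal Python sketch of document-level routing. It assumes the router's per-token probabilities are already available as plain lists. It ranks experts by their average probability over the document and lets each token choose its top-k experts only from that shared pool. The function names, the toy numbers, and the averaging rule are illustrative assumptions, not code from the EMO release, which applies this inside every MoE layer of a trained network.

```python
def document_expert_pool(router_probs, pool_size):
    """Hypothetical helper: experts with the highest mean router probability in a document."""
    num_tokens = len(router_probs)
    num_experts = len(router_probs[0])
    # Average each expert's router probability over all tokens of the document.
    avg = [sum(tok[e] for tok in router_probs) / num_tokens for e in range(num_experts)]
    ranked = sorted(range(num_experts), key=lambda e: avg[e], reverse=True)
    return sorted(ranked[:pool_size])


def route_within_pool(router_probs, pool, top_k):
    """Hypothetical helper: each token picks its top_k experts, but only from the shared pool."""
    routes = []
    for tok in router_probs:
        best = sorted(pool, key=lambda e: tok[e], reverse=True)[:top_k]
        routes.append(sorted(best))
    return routes


# Toy document: 3 tokens, 4 experts (each row is one token's router probabilities).
probs = [
    [0.1, 0.5, 0.3, 0.1],
    [0.5, 0.3, 0.1, 0.1],
    [0.1, 0.45, 0.35, 0.1],
]
pool = document_expert_pool(probs, pool_size=2)
routes = route_within_pool(probs, pool, top_k=1)
print(pool, routes)  # → [1, 2] [[1], [1], [1]]
```

In the toy example, the second token would on its own prefer expert 0. Because expert 0 is not in the document's pool, it falls back to expert 1.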

Two adjustments were needed to keep training stable. First, the authors no longer compute the load-balancing loss, which aims to spread work evenly across experts, locally for each training batch. Instead, they compute it globally across many documents. Otherwise, the two training objectives would work against each other: one bundles a document's tokens onto a few experts, while the other spreads them across as many experts as possible.
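The tension is easiest to see with a standard auxiliary loss. The sketch below uses the Switch Transformer-style formulation as an assumed stand-in, since the article does not give EMO's exact formula. That formulation multiplies the number of experts by the sum, over experts, of the fraction of tokens routed to each expert times its mean router probability. Two toy documents that each stick to one expert look maximally unbalanced when scored one at a time, but perfectly balanced when scored together.

```python
def load_balance_loss(router_probs, assignments, num_experts):
    """Switch-Transformer-style auxiliary loss (assumed stand-in for EMO's exact formula).

    router_probs: one probability vector per token.
    assignments:  the expert ids each token was actually routed to.
    """
    num_tokens = len(router_probs)
    counts = [0] * num_experts
    for experts in assignments:
        for e in experts:
            counts[e] += 1
    total = sum(counts)
    frac_routed = [c / total for c in counts]  # f_e: share of routing decisions per expert
    mean_prob = [sum(tok[e] for tok in router_probs) / num_tokens
                 for e in range(num_experts)]  # P_e: mean router probability per expert
    # Equals 1.0 under perfectly uniform routing and num_experts when every
    # token goes to a single expert with probability 1.
    return num_experts * sum(f * p for f, p in zip(frac_routed, mean_prob))


# Two toy documents with two tokens each: A uses only expert 0, B only expert 1.
doc_a = ([[1.0, 0.0], [1.0, 0.0]], [[0], [0]])
doc_b = ([[0.0, 1.0], [0.0, 1.0]], [[1], [1]])

local_a = load_balance_loss(*doc_a, num_experts=2)  # each document scored on its own
local_b = load_balance_loss(*doc_b, num_experts=2)
global_loss = load_balance_loss(doc_a[0] + doc_b[0], doc_a[1] + doc_b[1], num_experts=2)
print(local_a, local_b, global_loss)  # → 2.0 2.0 1.0
```

Scored locally, both documents are penalized for specializing. Scored globally, the same routing counts as balanced, which leaves room for per-document expert pools.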

Second, the researchers randomly vary the size of each document's expert pool during training instead of fixing it. This teaches the model to work with expert subgroups of different sizes at inference time.
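A pool-size schedule can be as simple as drawing a new size for each document or batch. In the sketch below, the uniform draw between 8 and 128 is an assumption; the article only says the size varies randomly. The lower bound follows from the eight active experts per token in the model the authors trained, since a smaller pool would leave tokens without enough experts to choose from.

```python
import random

NUM_EXPERTS = 128  # total experts in the model the authors trained
TOP_K = 8          # experts each token activates


def sample_pool_size(rng: random.Random) -> int:
    """Draw one document's expert-pool size (hypothetical uniform schedule)."""
    # The pool must hold at least TOP_K experts so every token can still pick TOP_K.
    return rng.randint(TOP_K, NUM_EXPERTS)


rng = random.Random(0)
sizes = [sample_pool_size(rng) for _ in range(1000)]
print(min(sizes), max(sizes))
```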

A quarter of the experts, one percent performance loss

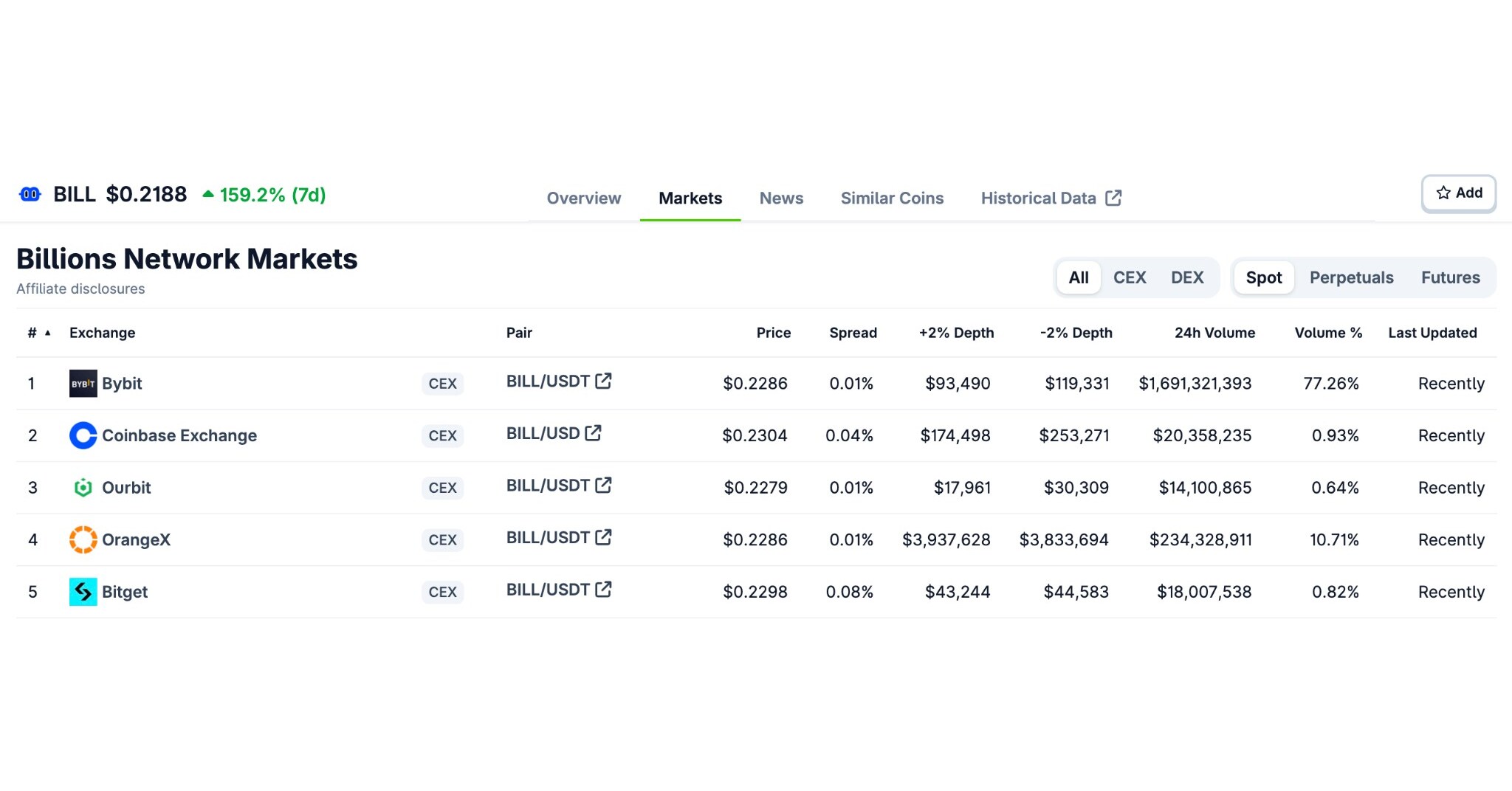

The team trained a MoE with 1 billion active and 14 billion total parameters (128 experts, eight of them active per token) on 1 trillion tokens from the OLMoE pre-training corpus. As a full model, EMO matches a standard MoE trained the same way. According to the authors, it also beats OLMoE, even though OLMoE was trained on five times as much data.

The researchers then started removing experts to see how far they could go. With just 25 percent of them left (32 out of 128), EMO loses about one percentage point of absolute performance averaged across several benchmarks. At 12.5 percent (16 experts), the drop is around three points.

A standard MoE collapses in the same setup, losing 10 to 15 percentage points and in some cases falling below a dense model with the same number of active parameters. On the math benchmark GSM8K, EMO subsets with just 12.5 percent of the experts recover full-model performance after fine-tuning.

Finding the right experts doesn’t take much data, the authors say. A single few-shot example is enough to pick a subgroup that performs comparably to one selected on a full validation dataset. EMO works with both simple router-based selection and the more specialized Easy-EP method.
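Router-based selection can be sketched in a few lines. You run the few-shot example through the model, record which experts each token's router picks, and keep the experts chosen most often. The counting rule, the tie-breaking by expert id, and the function name below are assumptions for illustration. In practice this would be repeated for each MoE layer, the paper's exact procedure may differ, and Easy-EP is not modeled here.

```python
from collections import Counter


def select_expert_subset(token_topk, subset_size):
    """Hypothetical helper: keep the experts the router picked most often for an example."""
    counts = Counter(e for experts in token_topk for e in experts)
    # Most frequent first; ties broken by the lower expert id so results are deterministic.
    ranked = sorted(counts, key=lambda e: (-counts[e], e))
    return sorted(ranked[:subset_size])


# Toy example: the top-2 experts the router chose for each of four tokens.
token_topk = [[3, 7], [3, 9], [7, 3], [1, 3]]
print(select_expert_subset(token_topk, 2))  # → [3, 7]
print(select_expert_subset(token_topk, 3))  # → [1, 3, 7]
```

In the setup the article describes, each token would contribute eight experts and the kept subset would be, for example, 32 of 128.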

Experts learn topics, not punctuation

To understand what EMO actually learned, the researchers analyzed how the model distributes tokens to experts internally. For each token, they recorded the probability with which the router sends it to each expert. These patterns create a kind of fingerprint per token. They then grouped tokens with similar fingerprints into clusters.
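The fingerprint analysis can be approximated with ordinary k-means on the routers' probability vectors. The article does not say which clustering method the authors used, so k-means, the squared Euclidean distance, and the toy data below (four tokens, three experts) are assumptions. The two tokens that lean on expert 0 end up in one cluster, and the two that lean on expert 2 end up in the other.

```python
import random


def sq_dist(u, v):
    """Squared Euclidean distance between two fingerprints."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def kmeans(points, k, iters=20, seed=0):
    """Plain k-means; returns a cluster label for each point."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each fingerprint joins its nearest centroid.
        labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members (keep it if empty).
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Toy fingerprints: router probabilities of four tokens over three experts.
fingerprints = [
    [0.8, 0.1, 0.1],
    [0.7, 0.2, 0.1],
    [0.1, 0.1, 0.8],
    [0.1, 0.2, 0.7],
]
labels = kmeans(fingerprints, k=2)
print(labels)
```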

The difference is clear-cut. In a standard MoE, expert clusters correspond to shallow linguistic categories such as prepositions, proper names, and definite articles. EMO’s clusters map to actual topics, such as “health, medical and wellness,” “US politics and elections,” or “film, music, TV and book reviews.” Tokens from the same document converge on a single cluster in EMO; in a standard MoE, they scatter across many. An interactive visualization of the clusters is available online.

On a sample of 20 million documents from the WebOrganizer dataset, which carries 24 human-assigned domain labels, the authors checked whether related domains also activate similar experts. In EMO, the patterns separate much more cleanly, especially in the model’s deeper layers; in the standard MoE, they overlap more.
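One simple way to ask whether related domains activate similar experts is to average the routing fingerprints of each domain's documents and compare those averages with cosine similarity. The metric, the domain names, and the numbers below are illustrative assumptions, not the authors' evaluation.

```python
import math


def domain_profile(fingerprints):
    """Average per-document routing fingerprints into one vector per domain."""
    n = len(fingerprints)
    return [sum(column) / n for column in zip(*fingerprints)]


def cosine(u, v):
    """Cosine similarity between two expert-usage vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy data: each row is one document's average router probability over three experts.
domains = {
    "health": [[0.8, 0.1, 0.1], [0.6, 0.3, 0.1]],
    "fitness": [[0.7, 0.2, 0.1], [0.5, 0.4, 0.1]],
    "politics": [[0.1, 0.1, 0.8], [0.1, 0.2, 0.7]],
}
profiles = {name: domain_profile(docs) for name, docs in domains.items()}
sim_related = cosine(profiles["health"], profiles["fitness"])
sim_unrelated = cosine(profiles["health"], profiles["politics"])
print(round(sim_related, 3), round(sim_unrelated, 3))
```

In a model with cleanly separated experts, related domains should score close to 1 and unrelated ones much lower. Heavily overlapping routing would push all pairs toward similar values.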

Use cases go beyond memory savings

The most obvious application is running models in memory-constrained settings where only domain-relevant experts get loaded. In a head-to-head comparison, EMO expert subgroups match or beat both a standard MoE with 32 experts and a dense model with the same number of active parameters, each trained from scratch.

The researchers also discuss adapting models at runtime. A child-friendly app, for example, could switch off clusters that respond to spam, gambling, or adult content. In an initial test, the authors retrained a 32-expert subgroup of EMO and plugged it back into the 128-expert model. This improved the full model, though not to the level of the standalone subgroup. EMO could also help with monitoring, since its experts reveal which parts of the model a given input uses.
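In principle, switching off a cluster could amount to masking its experts before the router makes its top-k choice. The sketch below assumes that mechanism. It picks the top-k experts among those still allowed and renormalizes their weights with a softmax over the chosen logits, a common MoE convention rather than a detail confirmed for EMO. The disabled expert id is hypothetical.

```python
import math


def route_with_mask(logits, disabled, top_k):
    """Pick top_k experts for one token, skipping any expert id in `disabled`.

    Returns {expert_id: weight}; weights are a softmax over the chosen logits only.
    """
    allowed = [e for e in range(len(logits)) if e not in disabled]
    chosen = sorted(allowed, key=lambda e: logits[e], reverse=True)[:top_k]
    m = max(logits[e] for e in chosen)  # subtract the max for numerical stability
    exps = {e: math.exp(logits[e] - m) for e in chosen}
    z = sum(exps.values())
    return {e: v / z for e, v in exps.items()}


logits = [2.0, 1.0, 0.5, 3.0]  # toy router logits for one token over four experts
disabled = {3}                  # hypothetical: expert 3 belongs to a switched-off cluster
weights = route_with_mask(logits, disabled, top_k=2)
print(weights)
```

Without the mask, this token would route to experts 3 and 0. With expert 3 disabled, it uses experts 0 and 1 instead, and the model itself stays unchanged.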

Ai2 is releasing the EMO model, a comparably trained standard MoE baseline, and the training code on Hugging Face and GitHub. The researchers have also published an interactive demo of the token activations. Open questions remain: how best to select and combine expert subgroups, how to retrain individual modules for specific tasks, and how the modular structure can be used to make models more interpretable.
